EvidentlyAI

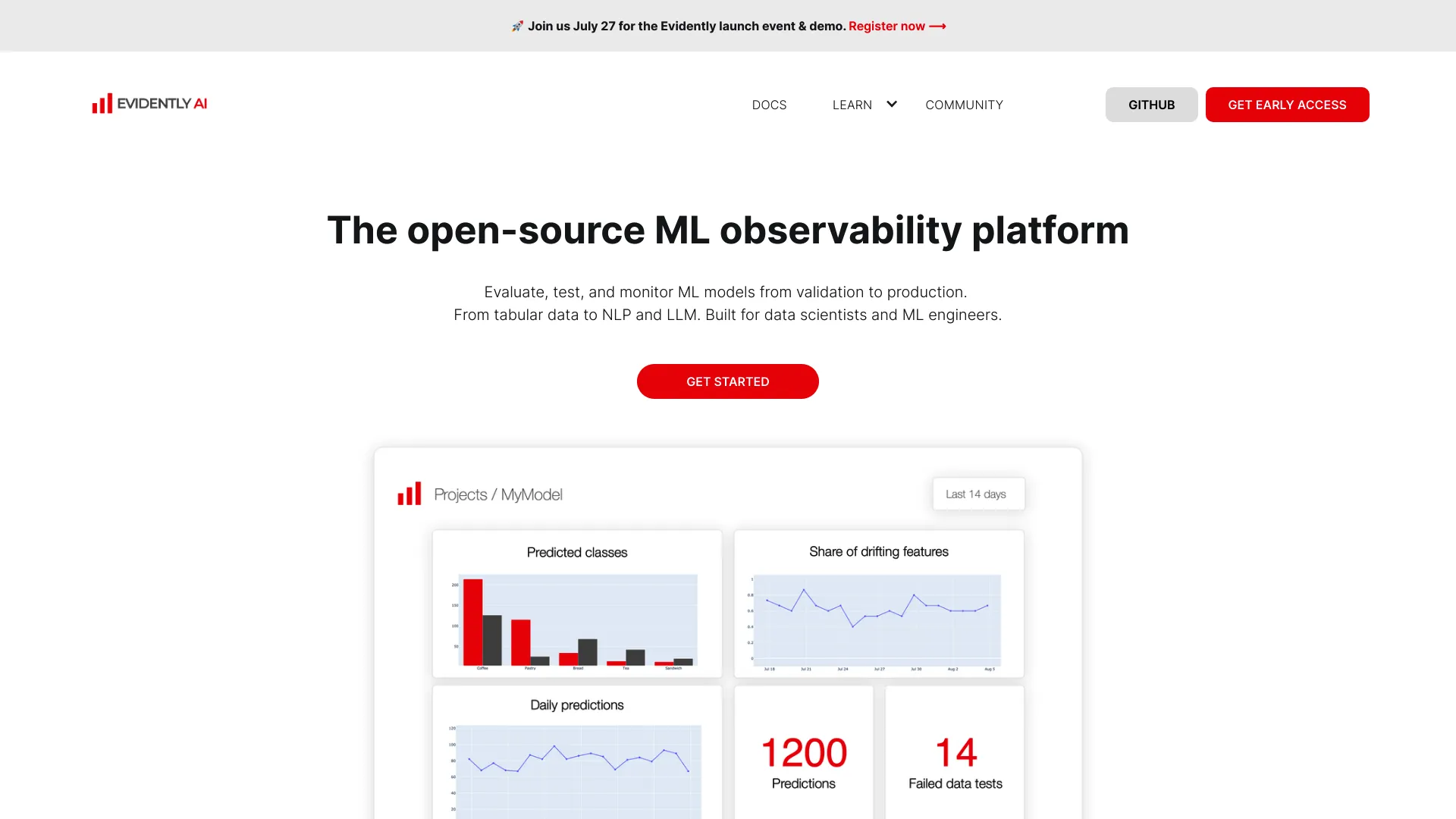

AI Model Monitoring Tool

Effortlessly monitor, evaluate, and test your AI models with Evidently AI – the go-to AI model monitoring tool.

What does EvidentlyAI do?

What is Evidently AI and what does it do?

Evidently AI is an evaluation, testing, and observability platform for AI-powered products. Built on top of the open‑source Evidently project, it provides a library of 100+ built‑in metrics, automated evaluation, synthetic data generation, and continuous testing. It helps you assess input and output quality, monitor data drift, and generate clear, shareable reports that prove your AI system is ready across updates.

How many built-in metrics does Evidently AI offer, and can I add custom metrics?

Evidently AI offers 100+ built‑in metrics. You can also add custom metrics and evaluations, combining rules, classifiers, and LLM-based evaluations to tailor the quality system to your use case.

How does Evidently AI help with tracking data drift and model performance?

Evidently AI tracks data drift and model performance over time, monitoring changes in inputs and outputs. It supports detecting drift across text, tabular data, and embeddings and provides a live dashboard to surface drift, regressions, and emerging risks early.

Is Evidently AI open-source and how can I use it?

Yes. Evidently AI is built on the open-source Evidently framework. You can try the open-source edition and self-host it, or use the cloud version for a hosted experience. The platform is designed to be open and extensible.

What data types does Evidently AI support?

Evidently AI supports tabular data, text data, and embeddings. It also provides capabilities relevant to retrieval and text workflows, such as RAG evaluation.

What are the main use cases for Evidently AI?

- Adversarial testing: probe your AI system for PII leaks, jailbreaks, and harmful content

- RAG evaluation: test retrieval accuracy and reduce hallucinations in retrieval-augmented pipelines

- AI agents: validate multi-step reasoning, tool use, and workflows

- Predictive systems: monitor classifiers, summarizers, recommenders, and traditional ML models

What outputs or reports does Evidently AI produce?

Evidently AI generates visual HTML reports and ML model cards. It offers shareable evaluation results and the ability to log outputs for further analysis or visualization, showing exactly where and how the AI system may break.

How can teams collaborate using Evidently AI?

Evidently AI is designed for collaboration among engineers, product managers, and domain experts. It supports a web interface and an API to run checks programmatically, and it makes it easy to share evaluation results and examples across teams.

What does Evidently AI cost, and what are the pricing options?

- Open-Source Version: Free to use, but self-hosted

- Cloud Version: Starts at approximately $100 per month, offering a ready-to-use web app with pre-built monitoring tabs, alerting integrations, and email support

Does Evidently AI offer alerts or monitoring automation?

The cloud version includes alerting integrations. This enables alerting within the cloud offering, while the open-source/self-hosted option focuses on evaluation and monitoring capabilities without built-in cloud alerting by default.

Is Evidently AI suitable for production monitoring?

Yes. Evidently AI is designed for continuous testing and AI observability, providing a live dashboard to track drift, regressions, and emerging risks across updates, making it suitable for production monitoring.

How do I get started with Evidently AI?

You can start by trying the open-source edition, or book a personalized demo. You can also explore the docs, tutorials, guides, and join the community for hands-on help.

Where can I get help or join the community?

Join the Evidently community through the Discord server, and leverage the documentation, tutorials, guides, and courses available on the site for guidance and support.

What outputs can be logged or exported for analysis?

You can log evaluation outputs and generate shareable reports. Visual HTML reports and ML model cards are part of the standard outputs, helping you document and analyze model performance over time.

Are there limitations or caveats to using Evidently AI?

Yes. Known limitations include:

- Limited data types (primarily tabular and text data)

- Dependence on the Pandas ecosystem for data processing

- Some workflows may require manual intervention rather than automated actions

- Alerts/automatic remediation are primarily available in the cloud offering through alerting integrations

What is RAG evaluation in Evidently AI?

RAG evaluation focuses on preventing hallucinations and testing retrieval accuracy in retrieval-augmented generation pipelines and chatbots.

What is Adversarial testing in Evidently AI?

Adversarial testing lets you probe your AI system for vulnerabilities such as PII leaks, jailbreaks, and harmful content before others do.

What formats of outputs can I expect to share with stakeholders?

You’ll get clear, shareable evaluation results and reports (visual HTML reports and ML model cards) that show where the AI system breaks and how it can be improved, down to individual responses.

How many organizations or users can use Evidently AI in a single setup?

The platform supports collaboration across teams and organizations, with options for role-based access and multiple users, especially in the cloud offering.

Can Evidently AI handle multiple prompts, models, or evaluation rules?

Yes. You can design your own AI quality system using the library of built-in metrics or add custom ones, and you can apply them across different prompts, models, or evaluation rules.